allcombos-22-0-1.fsm

I'm currently having a few problems.

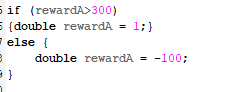

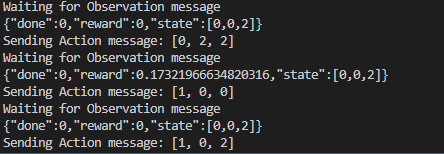

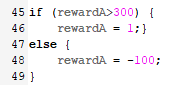

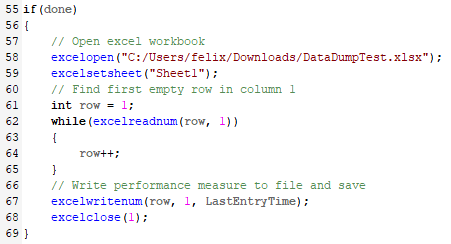

The first problem is that I want to add a penalty mechanism to my current reward function, but I observe that no negative values appear in my Visual Studio Code output, and the reward I set seems to only ever be an integer. I don't understand why this is the case.

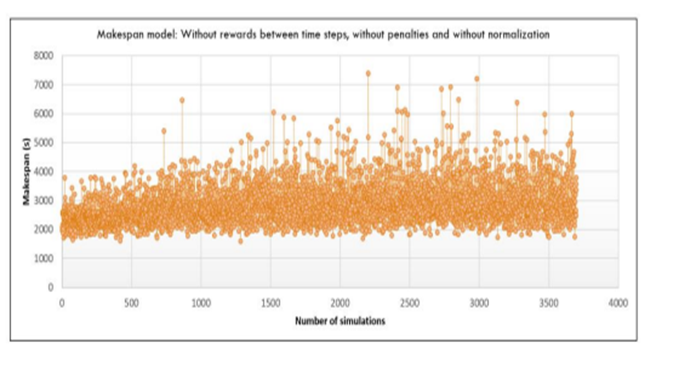

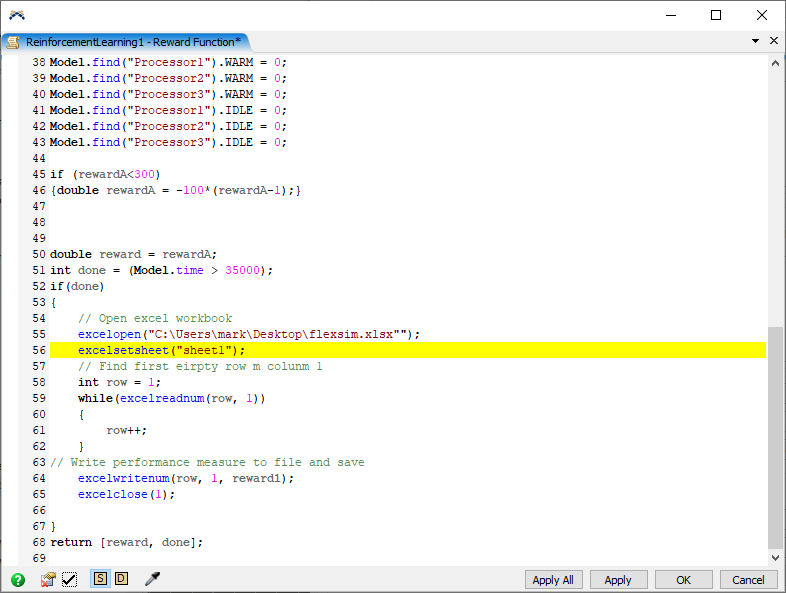

Also, I want to plot my training process as a graph. Is there a way to do that? Something like the picture below (the picture is taken from the Internet).