The Reports and Statistics tool can export statistics, such as stats_input, for every object in the model. Unless I am mistaken, to get the same functionality within an experiment I need to add a performance measure for each object. With 1000 objects, doing that manually would be tedious. The idea is to be able to select a statistic and have it become a performance measure for every object in the model, every object in a group, or every object in a class (I imagine the universal selector would be used). Allowing custom code to create custom statistics that are applied to each selected object would be a bonus.

Idea

Create a performance measure from statistic of every object in model, group, or class

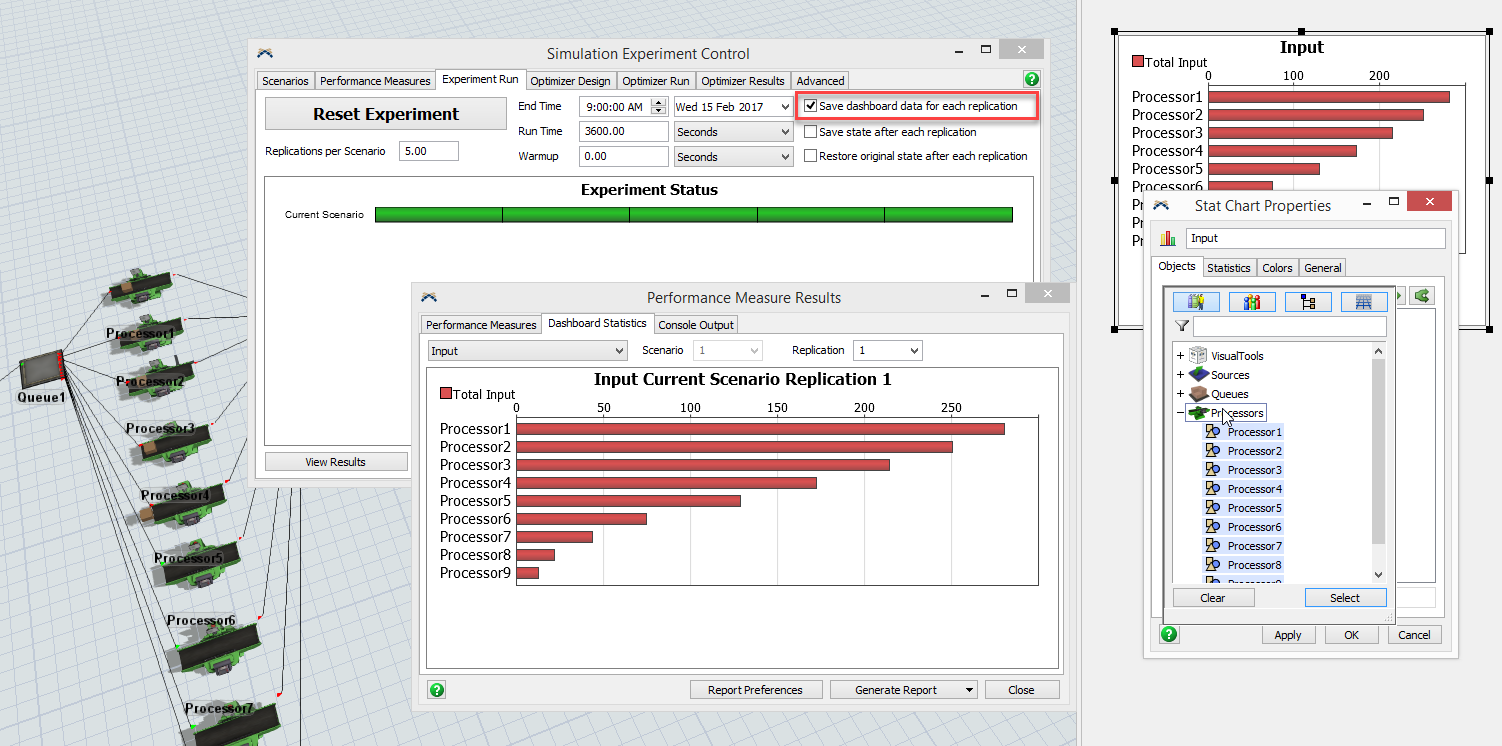

You can do this in the experimenter by adding a Dashboard that has those objects (easily added by class or group using the Dashboard Chart's Properties page) and then checking the "Save dashboard data for each replication" box on the Experiment Run tab.

You don't have to add a performance measure for each object to get that data.

You're right that I can get the data that way, but, unless I am mistaken, I can't see a summary of all the replications for each scenario for each object. For instance, the only way to see a boxplot of the input value for each replication of processor 1 across the experiment scenarios, and the same for processor 2, processor 3, and so on, is to manually add a performance measure for each object. It would be nice if I could more easily add a performance measure for the input of every object (or a group of objects), so that I get the summary charting that is done for performance measures. Does that make sense?

Yes, but you can't do that with the Reports and Statistics functionality either.

The second half of your idea is a good suggestion, but it gets muddied by the first half, which can already be done.

I've added your idea as a new item on the dev list. Thanks.

This also brings up a very interesting effect called the Bonferroni Inequality.

When you experiment, and you run replications of a model, you get a confidence interval, not an exact value. You are saying that you are 95% confident that the mean is within some range.

But if you do that with many measurements, you have to start worrying about your overall model confidence: what are the odds that all measured means are within their confidence intervals? If you measure 10 statistics, each with a 95% confidence interval, and treat them as independent, there is only about a 60% chance (0.95^10) that all means are within their confidence intervals. With 100 statistics, the chance that all of your means are actually within their confidence intervals drops to about 0.6% (0.95^100). Each interval might be very reliable on its own, but a few means are likely to fall outside their bounds.
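To make that arithmetic easy to check, here is a minimal Python sketch. It assumes the statistics are independent, which is a simplification, since statistics taken from the same model run are often correlated. The function names are hypothetical and only for illustration. The second function gives the Bonferroni lower bound, which holds without the independence assumption.

```python
def joint_coverage(confidence: float, n_stats: int) -> float:
    """Chance that all n_stats intervals contain their true mean,
    ASSUMING the intervals are independent of each other."""
    return confidence ** n_stats


def bonferroni_lower_bound(confidence: float, n_stats: int) -> float:
    """Bonferroni lower bound on the same chance.
    Needs no independence assumption, but can be as low as zero."""
    return max(0.0, 1.0 - n_stats * (1.0 - confidence))


for n in (10, 100):
    print(
        f"{n} statistics at 95%: "
        f"independent estimate = {joint_coverage(0.95, n):.4f}, "
        f"Bonferroni lower bound = {bonferroni_lower_bound(0.95, n):.4f}"
    )
```

For 10 statistics the independent estimate comes out near 0.60, and for 100 statistics near 0.006. The Bonferroni bound is more pessimistic because it covers the worst case.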

So as far as decision making goes, try to focus on a few key measurements, rather than many.

That is an interesting effect, and that is true for a 95% confidence interval with 100 stats. But with 100 statistics, a 99.9% confidence interval has only a 9.5% chance of at least one mean falling outside its interval, and a 99.99% confidence interval has only a 1% chance. So it depends on both the confidence level and the number of measures.
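To put numbers on that trade-off, here is a small Python sketch with hypothetical function names. The first function again assumes independent statistics. The second uses the Bonferroni correction, which is one common and conservative way (not the only one) to pick a per-statistic confidence level that gives a desired overall confidence.

```python
def chance_any_miss(confidence: float, n_stats: int) -> float:
    """Chance that at least one of n_stats true means lies outside
    its interval, ASSUMING the intervals are independent."""
    return 1.0 - confidence ** n_stats


def per_stat_confidence(overall_confidence: float, n_stats: int) -> float:
    """Bonferroni correction: split the allowed overall error evenly
    across the statistics to get the level each interval should use."""
    return 1.0 - (1.0 - overall_confidence) / n_stats


for level in (0.95, 0.999, 0.9999):
    print(f"{level:.2%} per statistic, 100 statistics: "
          f"{chance_any_miss(level, 100):.2%} chance of at least one miss")

needed = per_stat_confidence(0.95, 100)
print(f"Per-statistic level for 95% overall with 100 statistics: {needed:.2%}")
```

With 100 statistics, the Bonferroni correction asks for 99.95% intervals on each statistic to keep 95% confidence overall, which usually means running more replications.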

Maybe an example of when this would be useful would help.

Scenario:

- An AGV network with 5 pickup locations and 100 dropoff locations.

- A statistic that measures the average time from when an item is created to when it is dropped off at its destination station (this statistic, for each destination location, is what I want to use as a performance measure).

- 10 different path structures that I want to test.

So, if I capture the statistics and apply a very rigorous confidence interval, then I would be able to draw some conclusions on how one path structure would be advantageous/disadvantageous over another. It would seem to be disadvantageous to try and roll this into one performance measure as the unique performance of each station is important in deciding the overall best strategy. This data is possible to get now, but it has to be manually compiled to determine summary statistics and get all the raw data compiled in one chart, so I still think it would be useful to include this type of functionality.
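For reference, the manual compilation I am describing looks roughly like the Python sketch below. The row layout (scenario, station, replication, value) and all names and numbers are made up for illustration; this is not the actual format of the exported experiment data, which would need to be reshaped into something like this first.

```python
from collections import defaultdict
from statistics import mean, stdev

# Hypothetical rows: (scenario, station, replication, average delivery time).
# The values are invented purely to show the grouping step.
rows = [
    ("Paths A", "Dropoff1", 1, 41.0),
    ("Paths A", "Dropoff1", 2, 43.0),
    ("Paths A", "Dropoff1", 3, 45.0),
    ("Paths B", "Dropoff1", 1, 38.0),
    ("Paths B", "Dropoff1", 2, 40.0),
    ("Paths B", "Dropoff1", 3, 42.0),
]


def summarize(rows):
    """Group replication values by (scenario, station) and summarize each group."""
    groups = defaultdict(list)
    for scenario, station, _replication, value in rows:
        groups[(scenario, station)].append(value)
    return {
        key: {
            "n": len(values),
            "mean": mean(values),
            # Sample standard deviation needs at least two replications.
            "stdev": stdev(values) if len(values) > 1 else 0.0,
            "min": min(values),
            "max": max(values),
        }
        for key, values in groups.items()
    }


summary = summarize(rows)
for (scenario, station), s in sorted(summary.items()):
    print(f"{scenario} / {station}: n={s['n']} mean={s['mean']:.1f} "
          f"stdev={s['stdev']:.1f} range=[{s['min']:.1f}, {s['max']:.1f}]")
```

Doing this for 100 dropoff locations across 10 scenarios is the part that would be nice to have built in.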
